Try placing hands over your ears when following a conversation. The speech of other people immediately becomes muffled and hard to understand. You might pick some of the words, but you miss many others. Keeping up with the conversation quickly starts to feel tiring and frustrating as you try to guess the missing bits. You feel too embarrassed to continuously ask people to raise their voice or repeat what they have just said. In the end, you just want to give up following the conversation entirely.

To varying degrees, this is the reality the people with hearing loss face every day. Difficult situations crop up everywhere from meetings to noisy restaurants. Repeated hardships often cause people with hearing loss to avoid social situations which impoverishes their quality of life. Having an assistive device always at hand to transcribe conversations could be a big help to many of them.

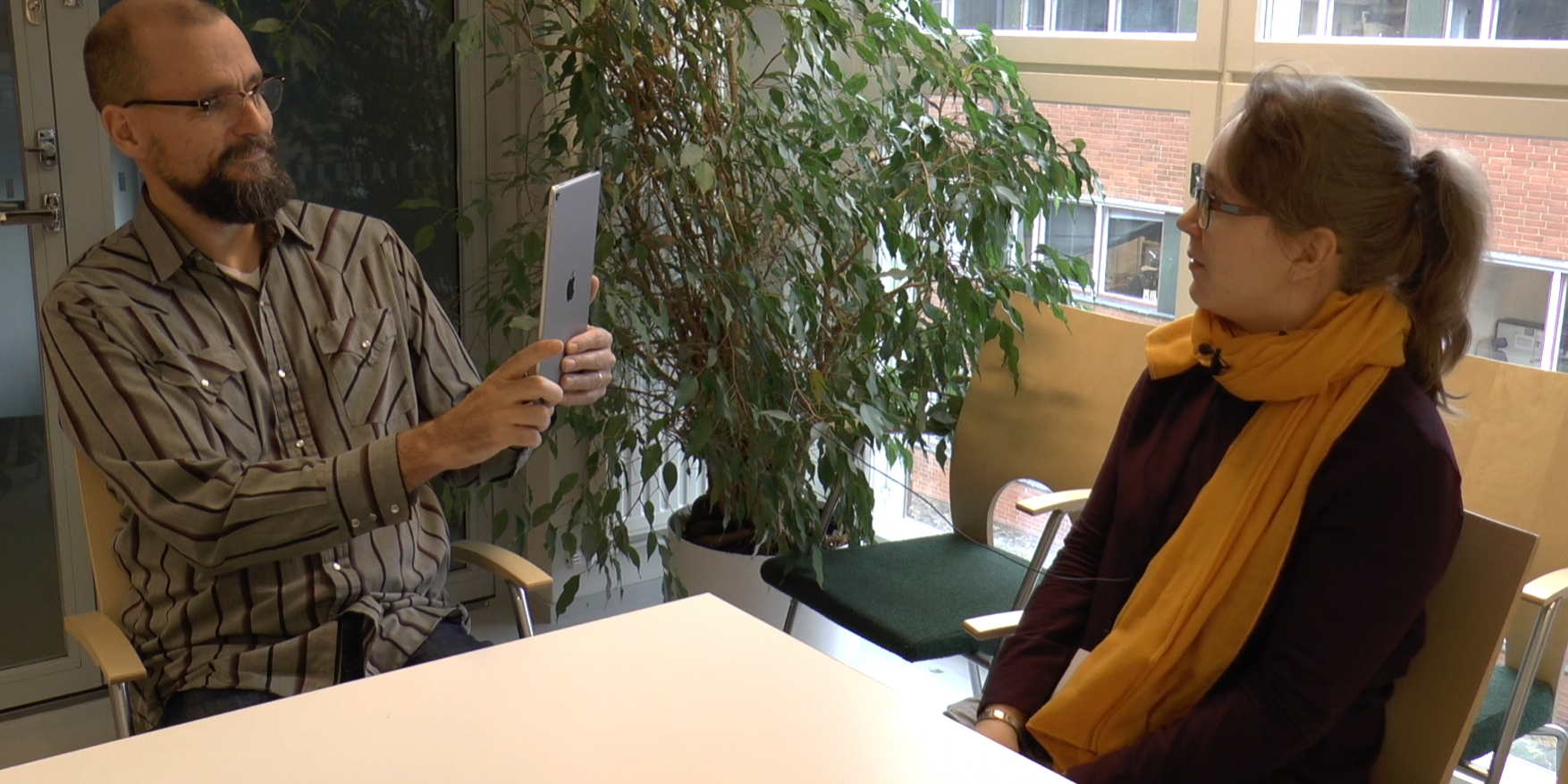

We have developed the Conversation Assistant, an iPad application which uses automatic speech recognition (ASR) to transcribe conversational speech for people with hearing loss at Aalto University. The application has three main functions:

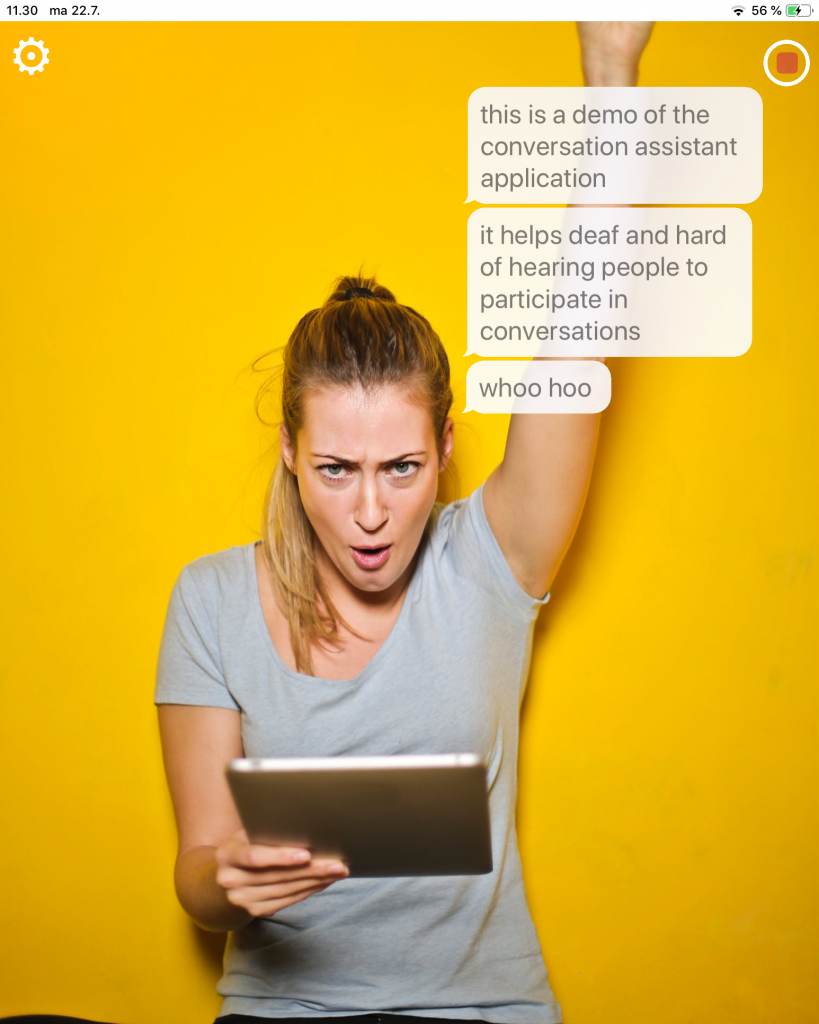

- It displays transcriptions on-screen in speech bubbles, which are placed on top of a video feed from the device camera.

- If the conversation partner is visible in the video, face recognition technology is used to position the speech bubbles next to the head of the conversation partner. This way the user does not have to switch the gaze between the device and the conversation partner, but can continue to see the body language, gestures and the lips of the conversation partner even when they look at the speech bubbles for support.

- The speech recognition itself is performed on a remote server running the latest ASR models from Aalto, since they are still computationally too heavy to run on a mobile device.

A screen capture of the Conversation Assistant user interface.

To collect feedback and see how helpful the Conversation Assistant is to people with hearing loss, we performed user tests with Finnish speaking deaf and hard of hearing. In the tests, participants had one-on-one conversations with a test administrator in noise. The results were positive with several participants noting they would immediately like to adopt the application in their daily lives. The most common request for improvement was naturally accuracy, as even a single recognition error can cause confusion and alter meaning. Another often mentioned feature was speaker recognition. Participants who mentioned this said they can often manage one-on-one conversations with the help of lip reading. To them, Conversation Assistant would be more helpful if it could for example handle group meetings at work or a gathering of friends at a noisy restaurant.

NAACL 2019 conference and SLPAT workshop

We presented the user test results in SLPAT 2019 i.e. the 8th Workshop on Speech and Language Processing for Assistive Technologies. SLPAT covers a wide range of assistive technologies, from speech recognition and synthesis for physical/cognitive impairments and personalized voices to nonverbal communication, brain-computer interfaces (BCI) and clinical applications. Other papers published at the workshop were concerned for example with diagnosing lateral sclerosis from speech, blissymbolics translation and language modeling for typing prediction in BCI communication. The workshop was co-located with NAACL 2019 in Minneapolis, a major natural language processing conference organized by the Association for Computational Linguistics. Here are the top picks from NAACL 2019 papers relevant to the MeMAD project:

- Probing the Need for Visual Context in Multimodal Machine Translation

- Cross-lingual Visual Verb Sense Disambiguation

- Beyond Task Success: A Closer Look at Jointly Learning to See, Ask, and GuessWhat

- The World in My Mind: Visual Dialog with Adversarial Multi-modal Feature Encoding

- VQD: Visual Query Detection in Natural Scenes

- ExCL: Extractive Clip Localization Using Natural Language Descriptions

- CLEVR-Dialog:A Diagnostic Dataset for Multi-Round Reasoning in Visual Dialog

- CITE: A Corpus of Image–Text Discourse Relations

The NAACL 2019 was held at the Hyatt Regency Minneapolis conference hotel.

And what did we learn from this work?

High accuracy and robustness of speech recognition are the most valuable features to the potential users of Conversation Assistant. In the future, we could try improving them, or speaker recognition, using the video feed of the application in combination with the speech audio. For example lip movements and gestures in a video could carry information about who is speaking and what they are saying. Another practical lesson was learned at the conference center, where our application suffered from a significant delay because the data had to travel from US to our server in Finland and back. This demonstrates the main weakness of a server-based design when compared to a on-device speech recognizer.

References

Anja Virkkunen, Juri Lukkarila, Kalle Palomäki and Mikko Kurimo. A user study to compare two conversational assistants designed for people with hearing impairments. Proceedings of the Eighth Workshop on Speech and Language Processing for Assistive Technologies. 2019.

Anja Virkkunen: Automatic Speech Recognition for the Hearing Impaired in an Augmented Reality Application. Aalto University. 2018.

Video demo of the Conversation Assistant

The Conversation Assistant was prototyped by Anja Virkkunen (now in MeMAD) in her M.Sc. project at Aalto University funded by the Academy of Finland in 2018.